AI-native insurance operations is the category every platform in this space now claims. The harder question, the one that separates a demo from production, is the architecture underneath it. Task-native insurance operations is the answer COVU is bringing to market. On March 10, 2026, COVU OS went into live production, and the first production readout is in.

This is not a launch announcement. It is an operator readout.

If you run an agency, sit on an agency board, or allocate capital to insurance distribution, the question you care about is simple. When service work moves to a task-native platform, do the unit economics actually improve, or does the model introduce new drag? Here is what the production data have shown since launch.

What task-native insurance operations actually means

AI-native describes what the platform is: built from the ground up around AI in service, not a legacy stack with AI bolted on. Task-native describes how it works. The two go together. Most platforms in the AI-native category route tickets or conversations to bots; task-native goes one level deeper.

Legacy agency ops route tickets to people. Task-native ops route atomic tasks. Every inbound contact (email, call, voicemail) becomes a service. Every service expands into a playbook of discrete tasks, and each task routes independently to whichever resource is best suited, AI or human, based on skill, line of business, license, and customer continuity. A Certificate of Insurance (COI) service runs six tasks; a new customer acquisition runs sixteen. The platform tracks every step from open to close. The unlock is not that work is broken into tasks, since every ops system does that. It is that each task is routed independently. The task is the atomic unit. The ticket is the shell.
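The sketch below makes "each task routes independently" concrete. It is an illustration under stated assumptions, not COVU OS code. The `Resource`/`Task` fields, the skill names, and the tie-break order are all hypothetical. The tie-break prefers continuity first, then AI over human, then name. Eligibility applies the three hard constraints the text names: skill, line of business, and license. Customer continuity is then used as the first preference among eligible resources. Each task in a service gets its own routing decision, so sibling tasks can land on different resources.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical data model for illustration only; field names and the
# preference order below are assumptions, not COVU OS internals.


@dataclass(frozen=True)
class Resource:
    name: str
    is_ai: bool
    skills: frozenset[str]
    lines_of_business: frozenset[str]
    licensed: bool  # holds the license that some tasks require


@dataclass(frozen=True)
class Task:
    task_id: str
    skill: str
    line_of_business: str
    requires_license: bool
    customer_id: str


def eligible(resource: Resource, task: Task) -> bool:
    """Hard constraints: skill, line of business, and license."""
    return (
        task.skill in resource.skills
        and task.line_of_business in resource.lines_of_business
        and (resource.licensed or not task.requires_license)
    )


def route(task: Task, resources: list[Resource], last_owner: dict[str, str]) -> Resource | None:
    """Pick a resource for ONE task, independently of its sibling tasks.

    Among eligible resources: prefer whoever last served this customer
    (continuity), then AI over human, then name as a deterministic tie-break.
    """
    candidates = [r for r in resources if eligible(r, task)]
    if not candidates:
        return None  # nothing eligible: leave the task unassigned
    return min(
        candidates,
        key=lambda r: (last_owner.get(task.customer_id) != r.name, not r.is_ai, r.name),
    )


# Hypothetical resources and a three-task service for one customer.
ai = Resource("ai-agent", True, frozenset({"doc-gen", "data-entry"}),
              frozenset({"commercial"}), licensed=False)
csr = Resource("csr-1", False, frozenset({"doc-gen", "data-entry", "coverage-advice"}),
               frozenset({"commercial"}), licensed=True)
service = [
    Task("t1", "data-entry", "commercial", False, "cust-9"),
    Task("t2", "doc-gen", "commercial", False, "cust-9"),
    Task("t3", "coverage-advice", "commercial", True, "cust-9"),
]
for t in service:
    print(t.task_id, route(t, [ai, csr], last_owner={}).name)
# t1 ai-agent
# t2 ai-agent
# t3 csr-1
```

With an empty continuity map, the two routine tasks go to the AI agent. The advice task needs both a skill and a license that only the human holds, so it goes to `csr-1`. The ordering is one reasonable choice among many. A real router would likely weigh load, SLAs, and confidence as well.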

That is COVU OS: an operating platform for insurance service work, built on a task-native architecture within the AI-native insurance services category.

The architecture matters because it determines what you can measure. Ticket-native ops measure tickets. Task-native ops measure every task inside every ticket, every routing decision, every hand raise, every delay. That granularity is what makes the platform improve week over week — and what makes the numbers below possible.

The headline metrics since launch

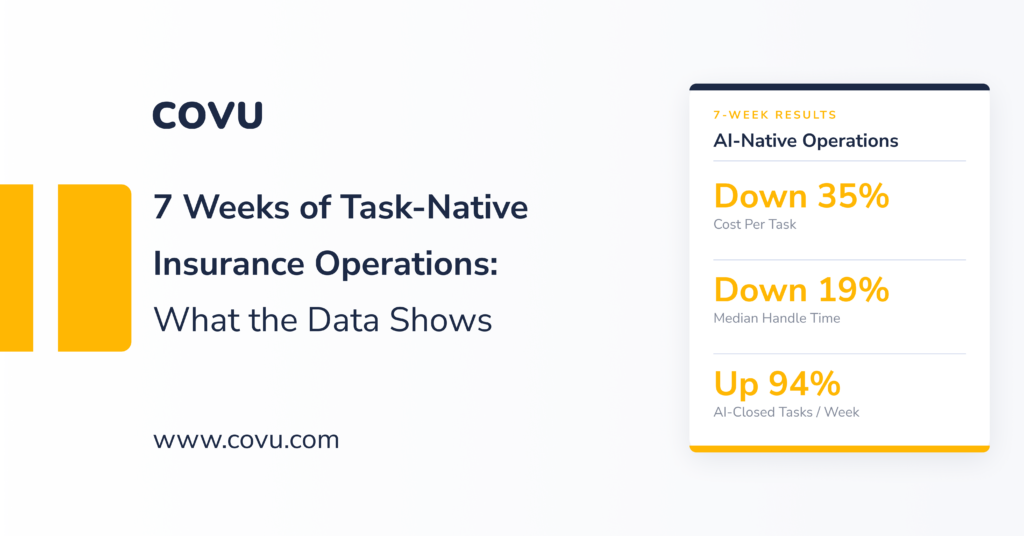

Four core metrics are all moving in the right direction. A fifth, AI-closed tasks, has emerged as the most important signal in the data.

Hand raises are down 41%. A hand raise is when a human agent hits a blocker mid-task and has to escalate or reassign. They have dropped every week since launch — the clearest indicator that the system is getting out of its own way.

Median handle time is down 19%. The time a human agent spends actively working a task has fallen from roughly 5.1 minutes at launch to around 4.1 minutes now. AI agents are excluded from this number — this is human productivity alone, improving because context is pre-loaded, systems are connected, and fewer tasks hit friction.
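As a quick arithmetic check, the rounded figures quoted above (5.1 and 4.1 minutes) imply a decline of about 19.6%. That is close to the reported 19%. Both inputs are approximations, and rounding them can account for the gap. The same formula explains why +94% reads as "nearly doubled": the new value is 1.94 times the old one.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`; negative means a decline."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


# Approximate median human handle times quoted above, in minutes.
print(f"{pct_change(5.1, 4.1):.1f}%")  # -19.6%
```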

AI-closed tasks are up 94%. These are tasks completed with zero human agent touch. Their weekly count has nearly doubled since launch, and they now account for roughly 52% of all completed tasks each week. The system processes more than 9,000 of them weekly. This is the metric that most directly validates task-native architecture, and the next section covers it in detail.

Cost per task is down 35%. This is labor cost per task touched, and it is the unit-economics metric that compounds. At scale, a 35% reduction in cost per service touch can show up in EBITDA within two quarters.

Weekly throughput is over 40,000 AI-routed tasks. That is not a projection. It is the live weekly volume with AI classifying and routing inbound at scale.

| Metric | Direction | Change since launch |
|---|---|---|
| Hand raises / day | ↓ | −41% |
| Median handle time | ↓ | −19% |
| AI-closed tasks / week | ↑ | +94% |
| Cost per task | ↓ | −35% |
| Weekly tasks (AI-routed) | n/a | 40,000+ |

The direction and slope both matter. Hand raises have fallen every single week since launch — a consistent decline, not a one-week dip. That is the profile of a platform stabilizing, not oscillating.
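Because every task leaves its own record, these headline metrics can be computed directly from task-level data. The next sketch shows one way to do that. `TaskRecord` and its fields are hypothetical stand-ins for whatever COVU OS actually logs. The definitions follow the text: a task is AI-closed if no human agent touched it, and median handle time counts human-worked tasks only. The last function checks the "fell every single week" claim against a weekly series.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median


# Hypothetical per-task record; field names are illustrative assumptions.
@dataclass(frozen=True)
class TaskRecord:
    closed_by_ai: bool     # completed with zero human agent touch
    human_minutes: float   # active human handle time (0 if AI-closed)
    hand_raises: int       # times a human escalated or reassigned mid-task


def period_metrics(records: list[TaskRecord]) -> dict[str, float]:
    if not records:
        raise ValueError("no completed tasks in this period")
    human = [r.human_minutes for r in records if not r.closed_by_ai]
    return {
        "ai_closed_share": sum(r.closed_by_ai for r in records) / len(records),
        # Human productivity only: AI-closed tasks are excluded.
        "median_human_minutes": median(human) if human else 0.0,
        "hand_raises": float(sum(r.hand_raises for r in records)),
    }


def declined_every_week(series: list[float]) -> bool:
    """True if each week's value is strictly below the previous week's."""
    return all(later < earlier for earlier, later in zip(series, series[1:]))


# Made-up toy data, not production numbers.
week = [TaskRecord(True, 0.0, 0), TaskRecord(False, 4.0, 1),
        TaskRecord(False, 5.0, 0), TaskRecord(True, 0.0, 0)]
print(period_metrics(week))
print(declined_every_week([12, 10, 9, 7]), declined_every_week([12, 10, 11]))
```

A strictly-declining check like this is what separates "a consistent decline" from "a one-week dip". It fails as soon as any single week ticks back up.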

How AI compounds inside a task-native architecture

The 40,000-task weekly throughput is where the architecture earns its keep. AI operates on two layers inside COVU OS, and each one is only possible because work is atomized into tasks to begin with.

Layer 1: AI triage. Across the inbound email channel, the AI triage layer classifies every message before it reaches a human. Of the inbound email COVU OS processes, the AI correctly identifies roughly 68% as irrelevant. That includes carrier notifications, spam, internal CCs, automated renewals, and the rest of the noise that clogs a traditional agency inbox. Those messages never hit a human queue. The remaining 32% is real work, split across new service requests, follow-ups on existing services, and informational queries. The triage layer currently runs at a 98% automatic routing success rate. It classifies and actions the overwhelming majority of inbound with no human intervention required.
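The next sketch shows the shape of that triage step. The four labels mirror the buckets named above: irrelevant, new service request, follow-up, and informational. Several pieces are assumptions. The classifier is assumed to exist upstream and is not shown. The destination names are hypothetical. So is the confidence threshold that sends uncertain messages to human review. Under those assumptions, "automatic routing rate" is the fraction of messages actioned without going to a person.

```python
from __future__ import annotations

from enum import Enum


class Triage(Enum):
    IRRELEVANT = "irrelevant"  # carrier notices, spam, internal CCs, automated renewals
    NEW_SERVICE = "new_service_request"
    FOLLOW_UP = "follow_up"
    INFORMATIONAL = "informational"


# Hypothetical destination names; not COVU OS identifiers.
DESTINATIONS = {
    Triage.IRRELEVANT: "archive",            # never reaches a human queue
    Triage.NEW_SERVICE: "open_service",      # expands into a task playbook
    Triage.FOLLOW_UP: "attach_to_existing_service",
    Triage.INFORMATIONAL: "informational_queue",
}
HUMAN_REVIEW = "human_review"


def route_message(label: Triage, confidence: float, threshold: float = 0.9) -> str:
    """Action the classifier's label automatically unless confidence is below the (assumed) threshold."""
    if confidence < threshold:
        return HUMAN_REVIEW
    return DESTINATIONS[label]


def auto_routing_rate(destinations: list[str]) -> float:
    """Fraction of messages actioned with no human intervention."""
    if not destinations:
        return 0.0
    return sum(d != HUMAN_REVIEW for d in destinations) / len(destinations)


# Made-up batch of (label, confidence) pairs from a hypothetical classifier.
batch = [(Triage.IRRELEVANT, 0.99), (Triage.NEW_SERVICE, 0.95),
         (Triage.FOLLOW_UP, 0.60), (Triage.IRRELEVANT, 0.97)]
routed = [route_message(label, conf) for label, conf in batch]
print(routed, auto_routing_rate(routed))
```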

Layer 2: AI-closed tasks. Once work enters the system as tasks, AI does not just route it; it closes a meaningful share of it. Roughly 52% of all completed tasks each week are now fully AI-closed, with no human agent touching them, and weekly AI-closed volume has grown 94% since launch. An AI agent cannot close a "ticket" the way ticket-native ops define it, because a ticket is a compound workflow with many steps of varying nature. But AI can close an atomic task. Task-native architecture is what makes end-to-end AI task completion possible: the task is small enough, well-defined enough, and standalone enough for AI to own from intake to resolution.
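The following sketch illustrates why AI closure only becomes visible at task granularity. It applies two accounting rules to the same service. Under task-level accounting, every untouched task counts. Under ticket-level accounting, the ticket counts only if no task was touched. The six-task length matches the COI example above. The task names, and which two tasks need a human, are made up.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TaskOutcome:
    name: str
    human_touched: bool


def ai_closed_tasks(service: list[TaskOutcome]) -> int:
    """Task-level accounting: every task with zero human touch counts."""
    return sum(not t.human_touched for t in service)


def ai_closed_ticket(service: list[TaskOutcome]) -> bool:
    """Ticket-level accounting: the ticket counts only if no task was touched."""
    return all(not t.human_touched for t in service)


# Hypothetical six-task service; placeholder names, not a real COI playbook.
coi = [TaskOutcome(f"task-{i}", human_touched=(i in {3, 5})) for i in range(1, 7)]
print(ai_closed_tasks(coi), ai_closed_ticket(coi))  # 4 False
```

Four of the six tasks closed with no human touch. A ticket-native system would still record the whole thing as human work.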

The combined effect reframes the P&L. Labor cost in a service organization is a function of volume and handle time. Suppose a task-native platform routes each inbound unit independently. It removes 68% of inbound before it touches a human, cuts handle time on the remainder by 19%, and then fully closes more than half of the actual work with no human touch at all. The output is a service function that scales without linear headcount. That is the operational leverage investors underwrite in the AI-native category, and it is what task-native architecture is built to deliver.
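A stylized way to see how these levers stack is to treat them as independent multipliers on human minutes, relative to a baseline where a human touches everything. That independence is an assumption the production data do not establish. The 68% applies to the inbound email channel and the 52% to completed tasks, so multiplying them together is a simplification. Treat the result as an illustration of compounding. It is not a measured outcome, and it is not comparable to the 35% cost-per-task figure, which is measured per task.

```python
def relative_human_minutes(filtered_share: float, ai_closed_share: float,
                           handle_time_cut: float) -> float:
    """Human minutes as a fraction of an all-human baseline.

    Stylized: assumes the three effects are independent and multiply.
    """
    for x in (filtered_share, ai_closed_share, handle_time_cut):
        if not 0.0 <= x <= 1.0:
            raise ValueError("shares must be between 0 and 1")
    remaining_volume = (1 - filtered_share) * (1 - ai_closed_share)
    return remaining_volume * (1 - handle_time_cut)


# Inputs taken from the figures in this section, under the assumptions above.
print(round(relative_human_minutes(0.68, 0.52, 0.19), 3))  # 0.124
```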

The second-order effect matters even more. When noise is filtered at triage and AI closes the standard-playbook work, the human queue becomes denser with genuinely judgment-dependent work. Every task a human touches is a task that actually requires human skill. For an agency processing thousands of inbound contacts per week, the math compounds fast: a team sized for total inbound volume is dramatically over-resourced for the actionable subset, and further over-resourced once AI handles the routine tasks.

The platform also closes the visibility gap that plagues legacy service ops. When irrelevant inbound is indistinguishable from real work, what is actually slipping is impossible to see. With AI triage in place, AI closing a measurable share of tasks, and observability instrumented across the full task flow, the operator knows exactly where capacity is going. At scale, that is the difference between capacity planning by headcount and capacity planning by throughput.

What this tells you about the category

For an agency principal or an investor looking at distribution assets, the production data point to four things worth holding onto.

The cost curve is real. A 35% cost-per-task reduction since launch, with the primary optimization lever — routing — still being refined, is the kind of operating leverage that justifies the platform thesis. That number lives in a weekly funnel of 40,000 tasks, not a vendor deck.

The volume filter is the hidden unlock. Every incumbent benchmark for agency service productivity assumes a human touches all of the inbound. A task-native architecture — where every task routes on its own merits — removes roughly two-thirds of that inbound before it ever reaches the queue. That single fact makes most legacy capacity planning obsolete.

AI-close is the compounding layer. Beyond filtering noise, AI now fully closes more than half of the actual work that enters the system. This is only structurally possible because the work is atomized — AI agents can own a well-defined task end-to-end in a way they cannot own a compound ticket. As playbooks expand to more service types, more of what an agency processes moves into the AI-closed column, and the service function scales further without scaling headcount.

The sequencing signals whether the team is operating with discipline. COVU OS is not trying to ship everything at once. Stability came first, then friction reduction, then routing optimization, now expanded capability. That is the right order. And it is the best predictor of where the platform will be at week 50.

The platform is reading its own signals and improving every week. For agencies and investors evaluating the AI-native category, task-native is the architectural signal worth tracking.

Ready to see what task-native operations look like against your own book? See how established agencies hand off operations to COVU →